We often see "point cloud" annotations on 3D models. What many people don't realize is that each annotated point marks an important location in 3D space: these feature points correspond to objects that are hard to make out with the naked eye in a raw scan (such as faces, hand shapes, and other objects), and labeling them keeps that part of the point cloud from being confused with the rest of the scene.

What does 3D point cloud annotation do?

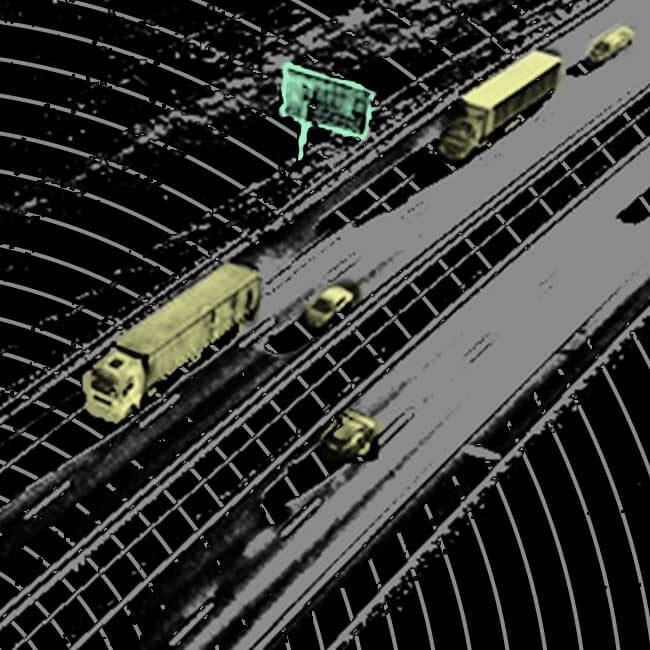

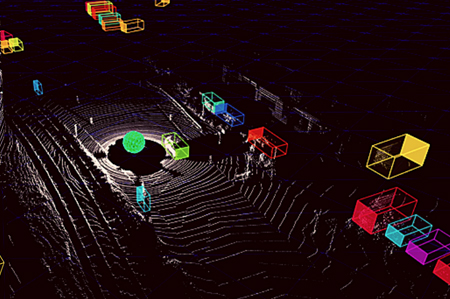

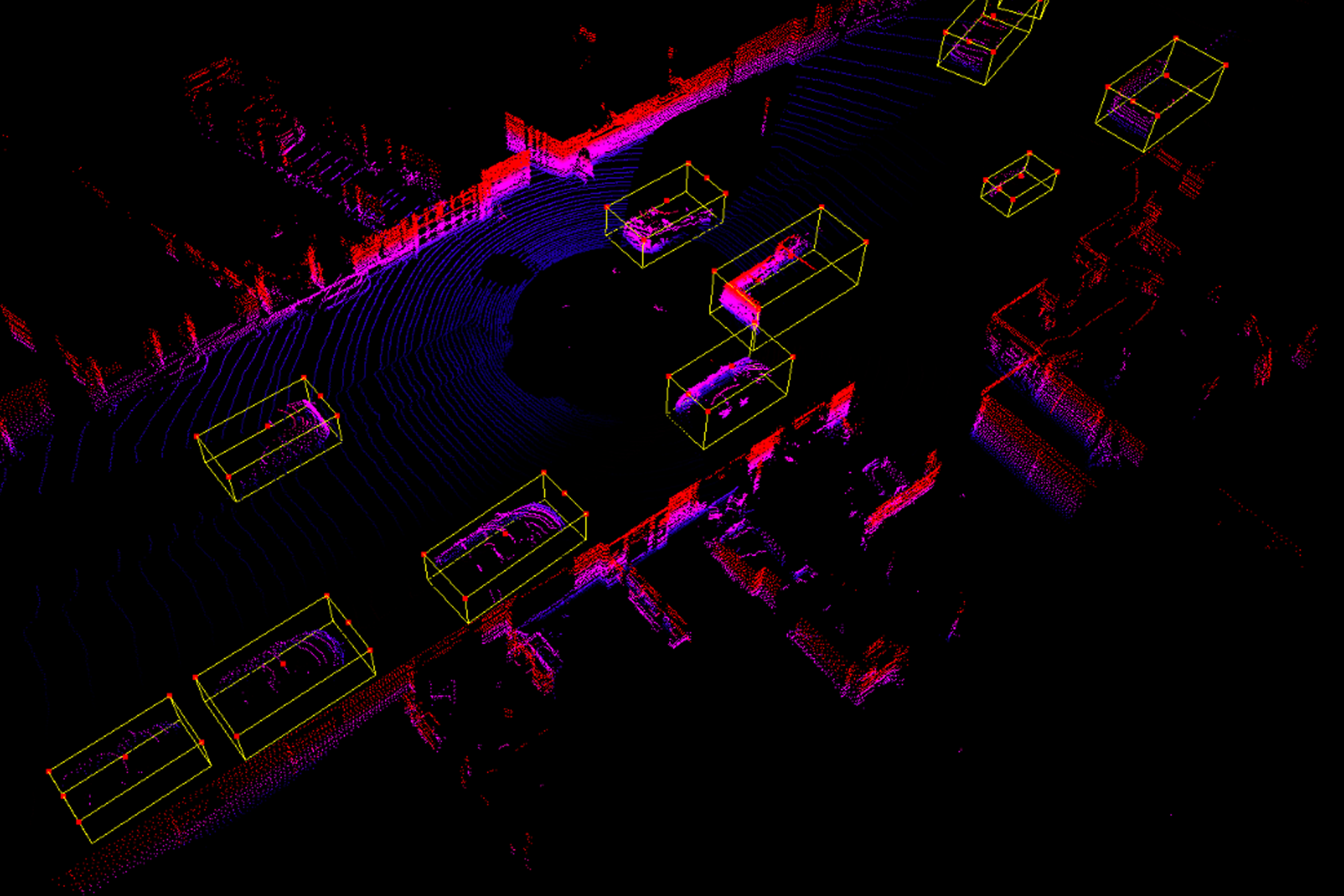

In the images above, the annotations appear as many small black dots; these dots are the marks on the 3D point cloud model. The data is collected with lidar, and the 3D point cloud is then accurately calibrated to obtain the final point cloud result. Computers can process and analyze this annotated data to produce an accurate, clear 3D model, along with 3D data and various attribute information. The data can also be used to train and run artificial intelligence models in fields such as computer vision and autonomous driving.
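To make the idea of annotated point cloud data concrete, here is a minimal sketch in plain Python. It assumes each point carries x, y, z coordinates plus one label; the `AnnotatedPoint` class, the `label_counts` helper, and the label names are hypothetical and only serve as illustration, not as the format of any particular annotation tool.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class AnnotatedPoint:
    """One lidar point plus the label an annotator assigned to it (hypothetical format)."""
    x: float
    y: float
    z: float
    label: str


def label_counts(points):
    """Count how many points carry each annotation label."""
    return Counter(p.label for p in points)


# Tiny hand-made cloud; the coordinates are illustrative only.
cloud = [
    AnnotatedPoint(1.0, 0.2, 0.0, "road"),
    AnnotatedPoint(1.1, 0.3, 0.0, "road"),
    AnnotatedPoint(4.0, 1.5, 0.8, "car"),
    AnnotatedPoint(4.2, 1.6, 1.1, "car"),
    AnnotatedPoint(4.1, 1.4, 1.4, "car"),
]

counts = label_counts(cloud)
print(counts["car"], counts["road"])
```

Per-label counts like these are one simple way to check an annotated scan before it is passed on to model training.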

Point cloud data processing and modeling:

1. Point cloud modeling and 3D modeling

First, we need to understand what a point cloud is. Generally speaking, a point cloud is obtained by surveyors who scan an entire space with specialized equipment and techniques. Common point cloud scanning devices include lidar (LiDAR, light detection and ranging) and total stations.

Three-dimensional modeling refers to the automatic generation of a three-dimensional solid model by a computer program. The model usually has two parts: its surface and its internal features. The solid model is determined by the scanned data points (also known as feature points), and it is built from these points together with their attribute data (such as height, width, and depth). Both lidar and total stations can be used for this kind of modeling in many cases.
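As a rough illustration of how attribute data such as width, depth, and height can be derived from scanned points, the sketch below computes an axis-aligned bounding box. It assumes points are `(x, y, z)` tuples with x as width, y as depth, and z as height; that axis convention and the `bounding_box_size` name are assumptions made for this example.

```python
def bounding_box_size(points):
    """Return (width, depth, height) of the axis-aligned box around the points."""
    if not points:
        raise ValueError("need at least one point")
    xs, ys, zs = zip(*points)
    return (max(xs) - min(xs), max(ys) - min(ys), max(zs) - min(zs))


# Four illustrative scanned points.
scan = [(0.0, 0.0, 0.0), (2.0, 0.5, 0.0), (2.0, 1.0, 1.5), (0.5, 1.0, 1.5)]

width, depth, height = bounding_box_size(scan)
print(width, depth, height)  # 2.0 1.0 1.5
```

An axis-aligned box is the simplest choice here; an object rotated relative to the axes would need an oriented box for tight measurements.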

2. Point cloud fine processing and 3D data visualization

Before processing a point cloud, we need to classify its points to make later steps easier. For example, points can be grouped by color (point cloud color, grayscale, or pixel value) or by shape (such as circles, triangles, and irregular shapes), and each group can then be processed further.
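One simple way to classify points before further processing is to threshold their grayscale value. The sketch below assumes each point is an `(x, y, z, gray)` tuple with gray in the range 0 to 255; the threshold of 128 and the group names are arbitrary choices for illustration.

```python
def classify_by_intensity(points, threshold=128):
    """Split (x, y, z, gray) points into 'dark' and 'bright' groups."""
    groups = {"dark": [], "bright": []}
    for x, y, z, gray in points:
        key = "bright" if gray >= threshold else "dark"
        groups[key].append((x, y, z))
    return groups


points = [
    (0.0, 0.0, 0.0, 30),
    (1.0, 0.0, 0.0, 200),
    (1.0, 1.0, 0.5, 128),
    (0.0, 1.0, 0.5, 90),
]

groups = classify_by_intensity(points)
print(len(groups["dark"]), len(groups["bright"]))  # 2 2
```

Classifying by shape would need neighborhood information about each point and is not covered by this sketch.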

(1) Point cloud grayscale processing, one of the most common processing methods in computer vision. The grayscale values of the point cloud are converted to RGB color, which most people find easier to view.

(2) Line and surface conversion: after statistical analysis of a large amount of data from many real scenes, the curves and surfaces in the point cloud are converted into a numerical matrix, from which the pixel value of each object is calculated.

(3) Shape characterization: the shapes of objects are extracted from many real scenes, their similarity is measured in terms of mathematical morphology, and the corresponding data is then computed.

(4) Visualization: the numerical matrix is used as the input for data visualization, and the corresponding charts are generated from it.
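Two of the steps above, converting grayscale to RGB and turning the point cloud into a numerical matrix that a visualization step can consume, can be sketched as follows. This is only one possible reading of those steps: the blue-to-red color ramp, the top-down projection, the grid size, and the function names `gray_to_rgb` and `rasterize` are all assumptions made for this example.

```python
def gray_to_rgb(gray):
    """Map a gray value in 0..255 onto a blue-to-red ramp (illustrative colormap)."""
    g = max(0, min(255, int(gray)))
    return (g, 0, 255 - g)


def rasterize(points, cell=1.0, cols=3, rows=3):
    """Project (x, y, z, gray) points top-down into a rows x cols matrix.

    Each cell holds the mean gray value of the points that fall into it,
    or None when the cell is empty. Points outside the grid are skipped.
    """
    sums = [[0.0] * cols for _ in range(rows)]
    hits = [[0] * cols for _ in range(rows)]
    for x, y, _z, gray in points:
        c, r = int(x // cell), int(y // cell)
        if 0 <= c < cols and 0 <= r < rows:
            sums[r][c] += gray
            hits[r][c] += 1
    return [
        [sums[r][c] / hits[r][c] if hits[r][c] else None for c in range(cols)]
        for r in range(rows)
    ]


points = [(0.2, 0.1, 0.0, 100), (0.8, 0.4, 0.3, 200), (2.5, 1.5, 1.0, 50)]

matrix = rasterize(points)
# Colorize the matrix so it can be handed to any plotting or image library.
colors = [[gray_to_rgb(v) if v is not None else None for v in row] for row in matrix]
print(matrix[0][0], colors[0][0])  # 150.0 (150, 0, 105)
```

The resulting `colors` grid is the kind of matrix a charting step could take as input; shape characterization is left out of this sketch.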

3. Point cloud database and 3D simulation model

Depending on actual needs, we can use a 3D laser scanner to scan real-world objects and 3D models, collect the extracted point clouds into a 3D laser point cloud library, and then use that library for real-time modeling. The process looks like this: in the modeling stage, we scan an object from top to bottom, one layer at a time.

After a layer is scanned with the 3D laser scanner and marked (the mark can be thought of as a small black dot on the point cloud model), the corresponding point cloud data is obtained. In the final stage, building the simulation model, the point clouds can be used to create virtual scenes such as object models, road models, and environments.
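The layer-by-layer workflow can be sketched as a small in-memory library that stores scan layers per object and assembles them into one point cloud. The `PointCloudLibrary` class and its methods are hypothetical, and the sketch assumes each layer is a horizontal slice at a known height z.

```python
from collections import defaultdict


class PointCloudLibrary:
    """Hypothetical in-memory store mapping object names to scanned layers."""

    def __init__(self):
        self._layers = defaultdict(list)

    def add_layer(self, name, z, xy_points):
        """Store one horizontal scan layer taken at height z."""
        self._layers[name].append((z, list(xy_points)))

    def cloud(self, name):
        """Return all (x, y, z) points of an object, ordered top to bottom."""
        layers = sorted(self._layers.get(name, []), key=lambda layer: layer[0], reverse=True)
        points = []
        for z, xy in layers:
            points.extend((x, y, z) for x, y in xy)
        return points


library = PointCloudLibrary()
library.add_layer("cone", 0.0, [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)])
library.add_layer("cone", 1.0, [(0.5, 0.5)])

cone = library.cloud("cone")
print(len(cone), cone[0])  # 4 (0.5, 0.5, 1.0)
```

A real library would likely persist the data and index it for fast lookup; a dictionary is used here only to keep the example short.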