Finding the Best Dataset for Machine Learning

When looking for a dataset, it is useful to first consider who might collect the information you are interested in. For instance, might it be gathered by a government agency, a nonprofit or nongovernmental organization, or other researchers? You can then check the websites of those likely data collectors.

Likewise, you can look for publications, articles, or government reports that cite the data. Can you tell where they obtained the dataset?

You can find datasets available online by searching Google or open data repositories. The library may also have access to the data through one of our subscriptions. Finally, you can try emailing the authors or researchers to request the data.

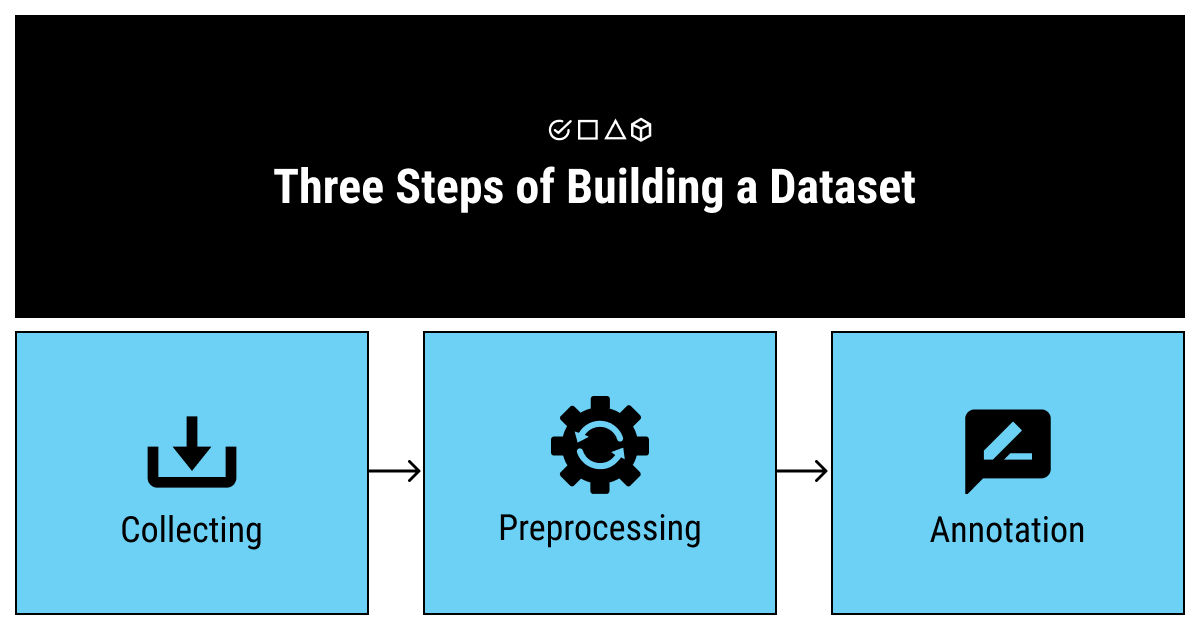

Steps to Constructing Your Dataset

To construct your dataset (and before doing data transformation), you should:

- Collect the raw data.

- Identify features and label sources.

- Select a sampling strategy.

- Split the data.

These steps depend a lot on how you've framed your ML problem, so revisit your problem framing and check your assumptions about data collection before you start.
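As a rough illustration of the last three steps, here is a minimal Python sketch that assumes the raw data has already been collected into a list of dictionaries. The helper names (`to_features_and_labels`, `stratified_split`), the toy records, and the choice of stratified sampling with an 80/20 split are all assumptions for illustration, not a prescribed method.

```python
import random
from collections import defaultdict

def to_features_and_labels(records, feature_keys, label_key):
    """Separate each record into a feature vector (X) and a label (y)."""
    X = [[rec[k] for k in feature_keys] for rec in records]
    y = [rec[label_key] for rec in records]
    return X, y

def stratified_split(records, label_key, train_frac=0.8, seed=0):
    """Split records into train/test sets so each label keeps roughly the same share in both.

    Hypothetical helper: stratified sampling is one possible strategy, chosen here for illustration.
    """
    rng = random.Random(seed)  # fixed seed makes the split reproducible
    by_label = defaultdict(list)
    for rec in records:
        by_label[rec[label_key]].append(rec)
    train, test = [], []
    for group in by_label.values():
        rng.shuffle(group)                   # randomize order within each label
        cut = int(len(group) * train_frac)   # how many records of this label go to training
        train.extend(group[:cut])
        test.extend(group[cut:])
    return train, test

# Toy raw data (hypothetical): 20 records with one feature and a two-class label.
records = [{"id": i, "length": i * 3, "label": "a" if i % 2 == 0 else "b"} for i in range(20)]

train, test = stratified_split(records, label_key="label", train_frac=0.8)
X_train, y_train = to_features_and_labels(train, ["length"], "label")
X_test, y_test = to_features_and_labels(test, ["length"], "label")
print(len(train), len(test))  # 16 4
```

Stratifying by label keeps a rare class from vanishing out of the test set. If your data has a time dimension, splitting by date may suit your problem better than a random split.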

Major Academic Repositories

- Dryad Digital Repository: Dryad is a nonprofit membership organization that is committed to making data available for research and educational reuse now and into the future.

- Harvard Dataverse: A free data repository open to all researchers from any discipline, both inside and outside of the Harvard community, where you can share, archive, cite, access, and explore research data. (Includes the data from other Dataverse repositories, e.g. UCSD)

Additional Open Data Repositories

- Figshare: Figshare is a repository where users can make all of their research outputs available in a citable, shareable, and discoverable manner.

- ICPSR (endorsed by APA): ICPSR maintains a data archive of more than 250,000 files of research in the social and behavioral sciences.

- Registry of Research Data Repositories: Offers a way to search over 2,000 worldwide data repositories.

Local datasets

- City of Seattle: The city's Open Data Program makes the data generated by the City of Seattle openly available to the public in order to improve residents' quality of life; increase transparency, accountability, and comparability; promote economic development and research; and improve internal performance management.

- King County: King County's Open Data portal lets you discover, analyze, and connect with publicly available datasets published by King County agencies.

Additional datasets recommended by SPU faculty

- Iris Data Set: The UCI Machine Learning Repository is a collection of databases, domain theories, and data generators used by the machine learning community for the empirical analysis of machine learning algorithms. Its Iris Data Set contains three classes of 50 instances each, where each class refers to a type of iris plant.

- Kaggle: Kaggle provides the code and data you need for data science work, with over 50,000 public datasets and 400,000 public notebooks.

- Kaggle – World Happiness Data: A classic dataset for analysis that tracks national happiness and other data by country over several years. It contains both categorical and continuous data, with data nested within countries over time.

- Linear Regression Data Sets for Machine Learning: A collection of open linear regression datasets available for free download. Some of the datasets include sample regression tasks to complete with the data.

- Project Implicit – Open Data for Implicit Racial Bias and More: Project Implicit was founded to foster the dissemination and application of implicit social cognition research. The site hosts many gigabytes of Implicit Association Test (IAT) data from psychology (measuring fast, automatically learned associations with race, gender, etc.) and is an important repository of datasets for research in psychology.

Should I Trust This Data Source?

First, consider the overall reputation of the source for your data. At the end of the day, datasets are created by humans, and those humans may have specific agendas or biases that can translate through your work.

All the data sources we've listed here are reputable, but there are other data sources that aren't. The one caveat to our list is that community-contributed collections, like data.world or GitHub, may vary in quality. When in doubt about your data source's reputation, compare it to similar sources that cover the same topic.

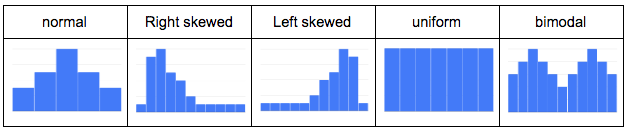

Is the Data Skewed?

Understanding how your dataset skews will help you choose the right data to analyze. It’s good to use visualizations to see how your dataset skews because it’s not always obvious by looking at the numbers alone.

For numeric columns, use a histogram to see what kind of distribution each column has (normal, left-skewed, right-skewed, uniform, bimodal, etc.).
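For a quick check before opening a charting tool, the sketch below prints a rough text histogram for one numeric column and computes its sample skewness (the third standardized moment). The function names, the bin count, and the toy values are hypothetical. As a rule of thumb, positive skewness suggests a longer right tail and negative skewness a longer left tail.

```python
def skewness(values):
    """Sample skewness (Fisher-Pearson coefficient, biased estimator): m3 / m2**1.5."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n  # second central moment (variance)
    m3 = sum((v - mean) ** 3 for v in values) / n  # third central moment
    return 0.0 if m2 == 0 else m3 / m2 ** 1.5

def text_histogram(values, bins=5):
    """Count values into equal-width bins and print one row of '#' per bin."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / bins or 1.0  # avoid zero width when every value is identical
    counts = [0] * bins
    for v in values:
        idx = min(int((v - lo) / width), bins - 1)  # the maximum value falls into the last bin
        counts[idx] += 1
    for i, c in enumerate(counts):
        print(f"{lo + i * width:8.2f} | {'#' * c}")
    return counts

column = [1, 1, 1, 2, 2, 3, 4, 8, 15]  # hypothetical numeric column
counts = text_histogram(column)
print(f"skewness = {skewness(column):.2f}")  # positive here: a long right tail
```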

This visual understanding will help you spot outliers and see general trends as you perform your data analysis. Specific recommendations on what to do next depend on your dataset, but, overall, how it skews gives a general idea of the data's quality and hints at which columns to use in an analysis. You can then use this general idea to avoid misrepresenting your data.
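Visual inspection can be paired with a simple numeric rule. One common choice (our assumption here, not something this guide prescribes) is Tukey's fences: values below Q1 - 1.5 * IQR or above Q3 + 1.5 * IQR are flagged for review. This sketch uses Python's `statistics.quantiles` (Python 3.8+); `iqr_outliers` and the sample column are hypothetical.

```python
import statistics

def iqr_outliers(values, k=1.5):
    """Return values outside Tukey's fences [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)  # quartile cut points (default 'exclusive' method)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]

column = [1, 1, 1, 2, 2, 3, 4, 8, 15]  # hypothetical numeric column
print(iqr_outliers(column))  # [15]
```

Flagged values are candidates to investigate, not automatically errors. Quartile conventions differ between tools, so a spreadsheet may compute slightly different fences.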

For non-numeric columns, use a frequency table to check how many times each value appears. In particular, you might check whether one value accounts for most of the rows. If that's the case, your analysis might be limited because the variety of values is small. Again, this is just to provide a general idea of data quality and indicate which relevant columns to use.
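For a non-numeric column, a frequency table is simply a count per distinct value. Here is a minimal sketch with `collections.Counter`, in which `frequency_table`, `dominant_value`, the 90% threshold, and the sample column are illustrative assumptions:

```python
from collections import Counter

def frequency_table(values):
    """Return (value, count, share) tuples, most common first."""
    total = len(values)
    return [(value, n, n / total) for value, n in Counter(values).most_common()]

def dominant_value(values, threshold=0.9):
    """Return the most common value if it accounts for at least `threshold` of the column, else None."""
    if not values:
        return None
    value, n = Counter(values).most_common(1)[0]
    return value if n / len(values) >= threshold else None

column = ["US"] * 9 + ["CA"]  # hypothetical categorical column
for value, n, share in frequency_table(column):
    print(f"{value:>4} {n:3d} {share:6.1%}")
print(dominant_value(column))  # US
```

When one value dominates like this, the column carries little variety, which limits what you can learn from it.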

You can create these visuals and frequency tables within Excel or Google Sheets using CSVs, but you might want to look at a business intelligence (BI) tool for complex datasets.

Start Using Your Free Datasets

Once you have your data and are confident of its quality, it’s time to put it to work. You can go a long way with the likes of Excel, Google Sheets, and Google Data Studio, but if you want good practice for your data career, you should cut your teeth with the real deal: a BI platform like Chartio.

A BI platform will provide powerful data visualization capabilities for any dataset, from small CSVs to large datasets hosted in data warehouses, like Google BigQuery or Amazon Redshift. You can play with your data to create dashboards and even collaborate with others.